…

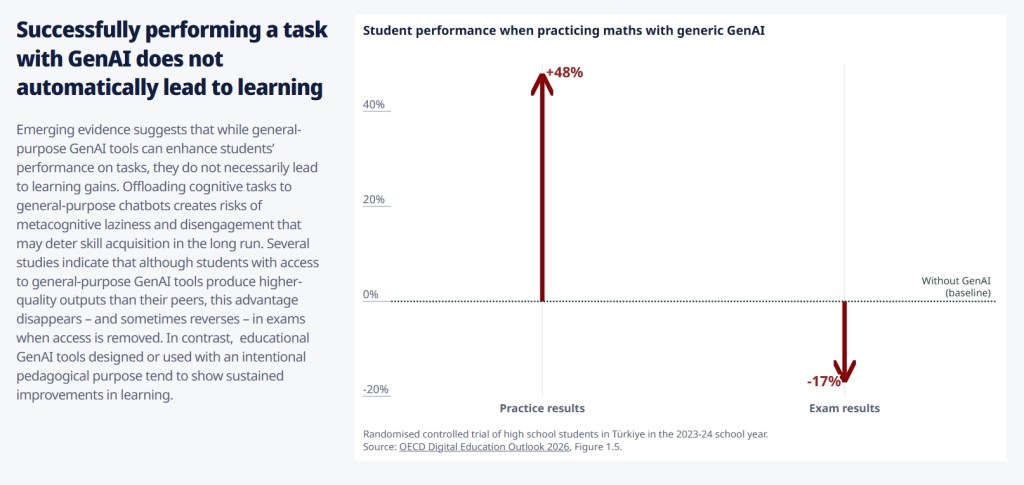

Note: In this randomised controlled trial, access to GPT-4 improved student performance when practising mathematics exercises, especially with the tutoring version. However, in closed-book exams, students who had used the base (general-purpose) version of GPT-4 performed 17% worse than those who never had access to it.

…

Source: Bastani, H., O. Bastani, A. Sungu, H. Ge, O. Kabakcı and R. Mariman (2024), “Generative AI Can Harm Learning”, The Wharton School Research Paper, available at SSRN: http://dx.doi.org/10.2139/ssrn.4895486.

SBG Summary: In this study, high school students practised mathematics either with or without AI. Those who used a general-purpose AI (GPT-4) during practice did 48% better on the practice tasks than those who could not use AI. Then the students took a test in which no one could use AI. This time, the group that had practised with AI scored 17% lower than the group that had practised without it.

Original Source: https://www.oecd.org/en/publications/oecd-digital-education-outlook-2026_062a7394-en.html

Does the use of general-purpose tools automatically enhance students’ learning?

Gasevic and Yan (2026[25]) provide an overview of the emerging research on teaching and learning with GenAI and highlight that, depending on how they are used, GenAI tools can either undermine or enhance learning. In some cases, the effects of GenAI can be misleading. For example, GenAI systems may improve the apparent quality of student work (that is, their performance on educational tasks) without improving their actual learning (their acquisition of knowledge and skills), a paradox illustrated by several studies.

A study of 1,000 high school students in grades 9, 10 and 11 in Türkiye examined three conditions for practising mathematics across six 90-minute sessions: 1) with their course notes and textbook (as usual); 2) with a general-purpose LLM chatbot (“GPT base”); 3) with an educational LLM chatbot (“GPT tutor”). Educational LLMs are configured (or fine-tuned) to avoid giving direct answers and to support learning (although there is no indication in this case that it was an adaptive learning tool). This randomised controlled trial analysed students’ results during practice and found that the percentage of exercises answered correctly was much higher for students using GenAI tools than for those working on their own, and highest for those using the educational chatbot. However, when their knowledge was assessed in a closed-book environment, these performance gains vanished: students who had used the general-purpose GPT scored lower than those who had studied on their own (Bastani et al., 2024[26]) (Figure 1.5). Students who had used the educational chatbot performed about as well as their self-study peers. The students using GenAI may well have enhanced their GenAI skills, but one would expect effective digital learning tools to enhance learning, not merely practice performance. This result hints at the possible under-performance of self-declared “educational” GenAI tools.

Other studies show similar results in different circumstances, although with less statistical power. Their value lies more in explaining why better performance with general-purpose tools does not necessarily lead to learning. Two studies analysed the metacognitive processes of Chinese higher education students who used general-purpose LLMs to revise an essay they had written in English without a GenAI tool. In the first, a randomised controlled trial (Fan et al., 2025[27]), students were assigned to revise their essay in one of four ways: 1) alone; 2) with human expert advice; 3) using a checklist (and digital tools); 4) with a general-purpose LLM. The authors found no statistically significant difference in motivation among the conditions, although the three groups with external support showed slightly higher motivation. In terms of task performance, the group using the GenAI tool scored highest, but this did not translate into greater knowledge gains as measured by a knowledge test. In terms of metacognitive processes, or self-regulated learning, the group using GenAI engaged in fewer metacognitive activities, especially evaluation and orientation. The second study (Chen et al., 2025[28]) compared students who revised their essays by seeking advice from human experts with those who used a general-purpose LLM. Students who interacted with human experts sought help in a linear way, following the stages described by “help seeking” theory: diagnosing what they needed help with, asking for help, evaluating the help received, iterating, and then implementing the final advice. By contrast, when interacting with a general-purpose chatbot, some students tended to ask for help directly and immediately implement the solution they received, often skipping the diagnosis, evaluation and iteration stages. The authors refer to this as “metacognitive laziness”, a way of describing “cognitive offloading”.

Another study is worth mentioning because it casts neuroscientific light on these findings (Kosmyna et al., 2025[29]). In the United States, students from 5 universities were asked to write an essay in 20 minutes under one of three conditions: on their own (“brain-only”), with a search engine, or with a general-purpose GenAI tool (ChatGPT). Within one hour of writing the essay, only 12% of the LLM group could quote something from it (exact recall), compared with 89% in the other two groups. Although the LLM group’s essays were well rated, its members were also less able to summarise their essay’s viewpoint, felt a lower sense of ownership, and produced more similar content across essays. Brain imaging suggested that their executive effort shifted from generating content to supervising AI-generated content, with lower neural connectivity and engagement. The authors also found that participants who wrote alone first and then used the LLM retained higher levels of activation and recall, whereas those who started with an LLM and then continued alone showed low levels of both. These findings suggest that engaging cognitively with a task before turning to GenAI is crucial.

This evidence shows that some students using general-purpose LLMs may take shortcuts, avoiding the productive struggle and cognitive effort needed for learning and for the durable acquisition of knowledge and skills. This aligns with a “fast” rather than a more productive “slow” and iterative use of GenAI, as argued by Beghetto (2026[30]), and with the notion of “lazy” use of GenAI (Vinchon et al., 2023[31]).